This guide gets you through your first Kong analysis as fast as possible. Five steps, five minutes.

Install Kong

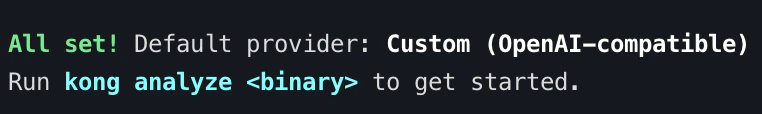

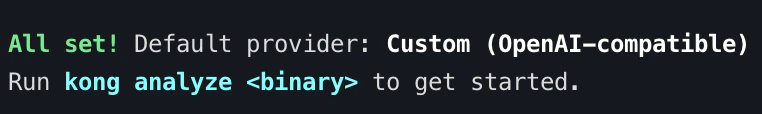

Run the setup wizard

The setup wizard configures which LLM providers to use and verifies your environment. It will detect your Ghidra installation, check your API keys, and save your preferences. You only need to run it once.
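If the wizard reports a problem, a rough preflight check can help narrow it down. The sketch below is not the wizard's actual logic. It assumes Ghidra's location is given by the GHIDRA_INSTALL_DIR environment variable (the convention pyghidra uses) and that provider keys live in ANTHROPIC_API_KEY and OPENAI_API_KEY (the variables the official SDKs read). Kong may look for its settings elsewhere, so treat all three names as assumptions.

```python
import os
from pathlib import Path
from typing import List, Mapping, Optional

# ASSUMPTION: common SDK conventions, not confirmed Kong configuration names.
PROVIDER_KEY_VARS = {
    "anthropic": "ANTHROPIC_API_KEY",
    "openai": "OPENAI_API_KEY",
}


def find_ghidra(env: Mapping[str, str] = os.environ) -> Optional[Path]:
    """Return the Ghidra install dir if GHIDRA_INSTALL_DIR points at one."""
    root = env.get("GHIDRA_INSTALL_DIR")  # ASSUMPTION: pyghidra-style variable
    if not root:
        return None
    # A Ghidra distribution ships its headless launcher under support/.
    support = Path(root) / "support"
    if (support / "analyzeHeadless").exists() or (support / "analyzeHeadless.bat").exists():
        return Path(root)
    return None


def configured_providers(env: Mapping[str, str] = os.environ) -> List[str]:
    """List providers whose (assumed) API-key variable is set and non-empty."""
    return [name for name, var in PROVIDER_KEY_VARS.items() if env.get(var)]


if __name__ == "__main__":
    print("Ghidra:", find_ghidra() or "not found (is GHIDRA_INSTALL_DIR set?)")
    print("Providers with keys:", configured_providers() or "none")
```

Both helpers take the environment as a parameter so they can be checked against a plain dictionary instead of the real process environment.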

Analyze a binary

Point Kong at any stripped binary. Kong will load the binary into an in-process Ghidra instance and run the full pipeline: triage, analysis, cleanup, synthesis, and export. You will see a live TUI showing progress as functions are analyzed.

You can also specify a provider or model explicitly.
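The step above assumes a stripped binary. If you are not sure whether an ELF file is actually stripped, one quick test is whether it still has a .symtab section: strip removes it, while the .dynsym table needed for dynamic linking usually survives. The standard-library sketch below reads the ELF section headers to check. It is an illustration, not part of Kong, and it does not handle PE or Mach-O files.

```python
import struct

SHT_SYMTAB = 2  # section type of the full (static) symbol table


def elf_has_symtab(data: bytes) -> bool:
    """Return True if an ELF image still carries a .symtab section."""
    if data[:4] != b"\x7fELF":
        raise ValueError("not an ELF file")
    is_64 = data[4] == 2                 # EI_CLASS: 1 = 32-bit, 2 = 64-bit
    end = "<" if data[5] == 1 else ">"   # EI_DATA: 1 = little-endian, 2 = big-endian
    if is_64:
        (shoff,) = struct.unpack_from(end + "Q", data, 0x28)          # e_shoff
        shentsize, shnum = struct.unpack_from(end + "HH", data, 0x3A)  # e_shentsize, e_shnum
    else:
        (shoff,) = struct.unpack_from(end + "I", data, 0x20)
        shentsize, shnum = struct.unpack_from(end + "HH", data, 0x2E)
    # Note: files using extended section numbering (e_shnum == 0) are
    # reported as having no .symtab; that case is rare and ignored here.
    for i in range(shnum):
        # sh_type is the second 4-byte field in both 32- and 64-bit headers.
        (sh_type,) = struct.unpack_from(end + "I", data, shoff + i * shentsize + 4)
        if sh_type == SHT_SYMTAB:
            return True
    return False
```

For example, elf_has_symtab(pathlib.Path("./target").read_bytes()) returning False means the full symbol table is gone. Here "./target" is a placeholder path. Checking sh_type rather than section names avoids having to parse the section-name string table.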

Review the output

When analysis completes, Kong writes its results to ./kong_output_{binary_name}/. Open analysis.json to see every recovered function with its name, return type, parameters, and a confidence indicator. Kong also writes everything back to Ghidra’s program database, so you can open the binary in Ghidra and see real names instead of FUN_ labels.

What Just Happened?

Behind the scenes, Kong ran a five-phase pipeline:

- Triage enumerated all functions, classified them by complexity, built the call graph, and matched known library signatures
- Analysis processed functions bottom-up from the call graph, building rich context windows for each LLM call
- Cleanup normalized results and unified struct proposals
- Synthesis took a global view across all functions to unify naming conventions
- Export wrote analysis.json and applied everything back to Ghidra
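Processing functions bottom-up means a function is analyzed only after the functions it calls, so the names and types already recovered for callees can feed into the caller's context. A generic way to get that order is a post-order depth-first traversal of the call graph, sketched below. This illustrates the idea and is not Kong's implementation: the dictionary-based call graph is an assumption, and recursive cycles are simply broken at the first back edge, which Kong may handle differently.

```python
from typing import Dict, Iterable, List, Set


def bottom_up_order(call_graph: Dict[str, Iterable[str]]) -> List[str]:
    """Order functions so that callees come before their callers.

    call_graph maps each function name to the names it calls. An iterative
    post-order DFS avoids Python's recursion limit on deep call chains.
    An edge back to a function already on the stack (recursion) is skipped,
    so cycles are broken arbitrarily.
    """
    order: List[str] = []
    visited: Set[str] = set()
    for root in call_graph:
        if root in visited:
            continue
        visited.add(root)
        stack = [(root, iter(call_graph.get(root, ())))]
        while stack:
            fn, callees = stack[-1]
            for callee in callees:
                if callee not in visited:
                    visited.add(callee)
                    stack.append((callee, iter(call_graph.get(callee, ()))))
                    break  # descend into the callee before finishing fn
            else:
                # Every callee is already emitted or on the stack: emit fn.
                stack.pop()
                order.append(fn)
    return order


if __name__ == "__main__":
    graph = {
        "main": ["parse_args", "run"],
        "run": ["read_config", "log"],
        "read_config": ["log"],
        "parse_args": [],
        "log": [],
    }
    print(bottom_up_order(graph))
    # → ['parse_args', 'log', 'read_config', 'run', 'main']
```

Callees that never appear as keys, such as imported library functions, still show up in the result; filter them out if you only want to order local functions.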

Next Steps

- Understand the output: see Output Formats for a detailed breakdown of analysis.json
- Try different providers: configure multiple LLM providers with the Setup Wizard
- See it in action: read the XZ Backdoor case study to see what Kong recovers from a real-world binary
- Go deeper: learn how Call-Graph Analysis orders functions for maximum context